The rapid expansion of artificial intelligence (AI) technology has thrust data centers into the spotlight as critical infrastructure for our increasingly digital world. Yet beneath this technological marvel lies a significant and largely overlooked problem: the enormous amount of energy required to keep these facilities running. Data centers currently consume more than 4% of the United States’ electricity, and nearly half of that goes to cooling alone, which works out to roughly 2% of the nation's total electricity use. This reliance on traditional cooling methods presents a thorny paradox: the infrastructure that enables innovation depends on unsustainable energy practices. If left unaddressed, this imbalance threatens to hold back future AI advances and worsen environmental concerns.

The crux of the issue is managing the immense heat generated by high-powered processors. Data centers conventionally rely on air cooling or liquid cooling, but both methods have inherent limits on efficiency and scalability. As AI models grow more complex and demand more computing power, the strain on cooling infrastructure becomes increasingly evident. The stakes are high: without radical innovation, we risk hitting the limits of current cooling paradigms, stifling progress and escalating environmental impact.

Innovative Approaches: The Promise of Two-Phase Cooling Technology

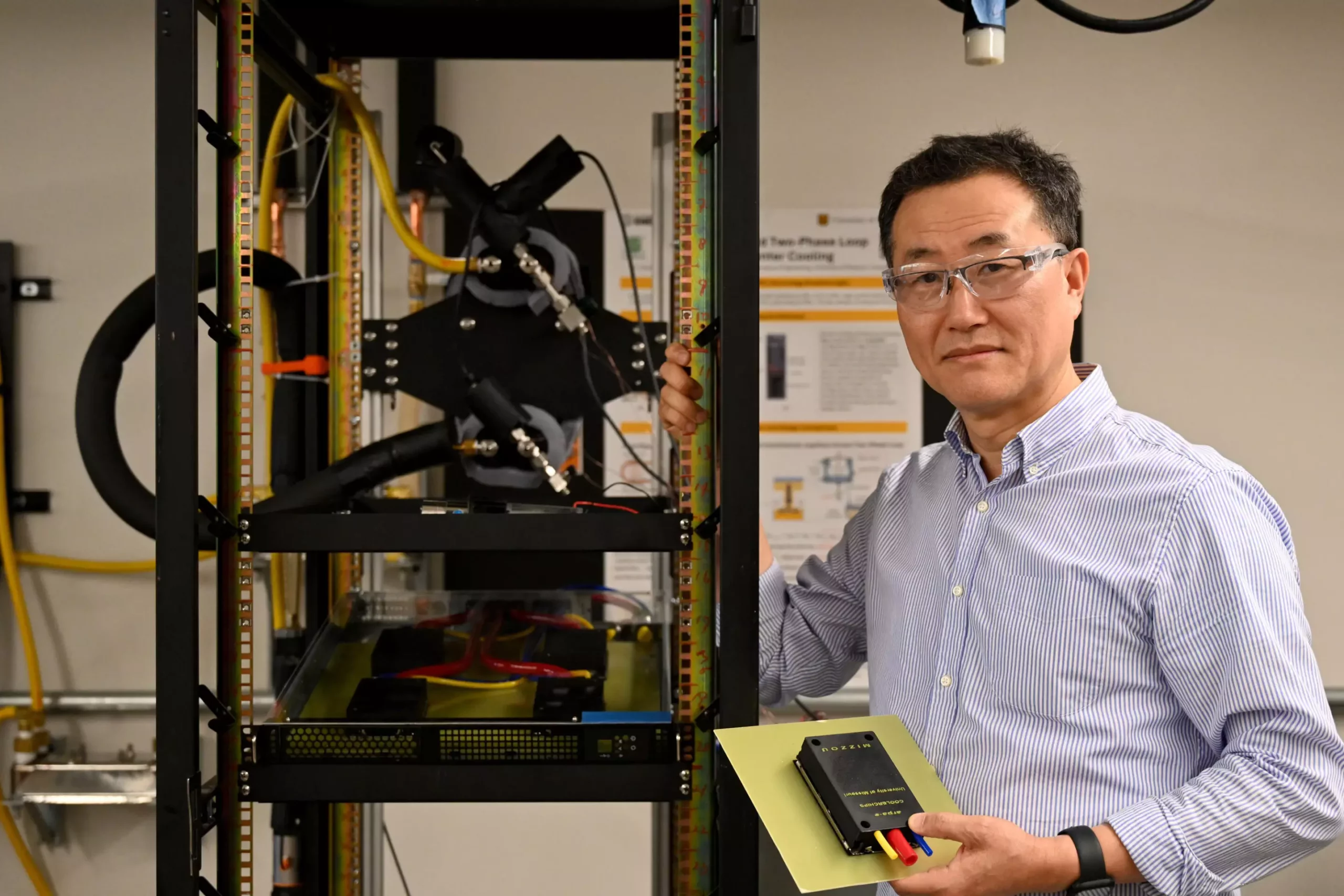

Enter the groundbreaking work of University of Missouri researcher Chanwoo Park, who is spearheading the development of a two-phase cooling system aimed at these mounting challenges. Unlike traditional methods, this technology relies on a phase change, specifically the controlled evaporation of a liquid into vapor, to transfer heat. Because a liquid absorbs a large amount of latent heat as it vaporizes, and does so at a nearly constant temperature, each unit of coolant can carry far more heat than it could by simply warming up. The system features a thin, porous metal surface on which boiling occurs, allowing heat to be dissipated with remarkably low thermal resistance.
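To make the latent-heat argument concrete, the back-of-envelope sketch below compares how much coolant must flow to remove the same heat load by warming a liquid versus evaporating it, and shows how a lower thermal resistance R shrinks the temperature rise via ΔT = Q·R. It is purely illustrative: water stands in for the working fluid (the article does not name the fluid used in Park's system), and the 700 W load, the 10 K temperature rise, and both resistance values are hypothetical numbers chosen for the example, not measurements from the Missouri work.

```python
"""Back-of-envelope comparison of single-phase vs two-phase heat removal.

All inputs are illustrative assumptions: water stands in for the working
fluid, and the heat load and thermal resistances are hypothetical.
"""

# Approximate properties of water near atmospheric pressure (assumed fluid).
CP_KJ_PER_KG_K = 4.18     # specific heat of liquid water, kJ/(kg*K)
H_FG_KJ_PER_KG = 2257.0   # latent heat of vaporization at ~100 C, kJ/kg


def sensible_heat_per_kg(delta_t_k: float) -> float:
    """Heat (kJ/kg) absorbed by warming the liquid delta_t_k kelvin, no boiling."""
    return CP_KJ_PER_KG_K * delta_t_k


def latent_heat_per_kg(vapor_fraction: float = 1.0) -> float:
    """Heat (kJ/kg) absorbed by evaporating vapor_fraction of the liquid."""
    if not 0.0 <= vapor_fraction <= 1.0:
        raise ValueError("vapor_fraction must be between 0 and 1")
    return H_FG_KJ_PER_KG * vapor_fraction


def mass_flow_g_per_s(heat_load_w: float, heat_per_kg_kj: float) -> float:
    """Coolant flow (g/s) needed to carry heat_load_w.

    1 kJ/kg equals 1 J/g, so watts (J/s) divided by J/g gives g/s.
    """
    return heat_load_w / heat_per_kg_kj


def temperature_rise_k(heat_load_w: float, resistance_k_per_w: float) -> float:
    """Temperature difference across a thermal resistance: dT = Q * R."""
    return heat_load_w * resistance_k_per_w


if __name__ == "__main__":
    load_w = 700.0  # hypothetical heat load of one processor, W

    single_phase = mass_flow_g_per_s(load_w, sensible_heat_per_kg(10.0))
    two_phase = mass_flow_g_per_s(load_w, latent_heat_per_kg())
    print(f"single-phase (10 K rise): {single_phase:.1f} g/s")     # 16.7 g/s
    print(f"two-phase (full evaporation): {two_phase:.2f} g/s")    # 0.31 g/s

    # Hypothetical resistances: a lower R means a cooler chip at the same load.
    for r in (0.05, 0.02):
        print(f"R = {r} K/W -> dT = {temperature_rise_k(load_w, r):.0f} K")
```

Under these assumptions, evaporating the coolant carries the same 700 W with roughly 54 times less flow than warming it by 10 K. Real two-phase loops typically evaporate only part of the liquid, which the `vapor_fraction` parameter lets you explore.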

What makes this approach truly compelling is its passive operation: the cooling process can function without the continuous energy input that fans or liquid pumps require. When cooling demand is low, the system adjusts on its own, minimizing energy consumption. When more cooling is needed, a mechanical pump switches on to remove the residual heat, and even then the added energy use remains minimal. Early tests suggest this approach could cut the energy required for cooling substantially, opening a path toward more sustainable data centers that can meet the demands of future AI technologies.
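The hybrid behavior described above, with passive cooling at low demand and a pump engaged only for the heat the passive loop cannot absorb, can be sketched as a simple load split. This is a hypothetical illustration, not the Missouri team's control logic: `plan_cooling`, `CoolingPlan`, and the idea of a fixed `passive_capacity_w` ceiling are assumptions introduced for the example, and the 500 W capacity in the usage lines is an arbitrary number.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CoolingPlan:
    """Hypothetical split of one heat load between two cooling modes."""
    pump_on: bool
    passive_heat_w: float  # heat removed by the passive two-phase loop
    pumped_heat_w: float   # residual heat handed to the pump-assisted loop


def plan_cooling(heat_load_w: float, passive_capacity_w: float) -> CoolingPlan:
    """Split a heat load between passive and pump-assisted cooling.

    passive_capacity_w is an assumed ceiling on what the passive loop can
    absorb; any load above it is treated as residual heat for the pump.
    """
    if heat_load_w < 0 or passive_capacity_w < 0:
        raise ValueError("loads and capacities must be non-negative")
    passive = min(heat_load_w, passive_capacity_w)
    residual = heat_load_w - passive
    return CoolingPlan(pump_on=residual > 0,
                       passive_heat_w=passive,
                       pumped_heat_w=residual)


if __name__ == "__main__":
    # Hypothetical loads against an assumed 500 W passive capacity.
    for load in (300.0, 500.0, 650.0):
        print(load, plan_cooling(load, passive_capacity_w=500.0))
```

In this sketch the pump stays off until the load exceeds the passive ceiling and then handles only the excess. A practical controller would likely add hysteresis (separate on and off thresholds) so the pump does not toggle rapidly when the load hovers near the limit.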

This radical shift in cooling methodology does more than improve efficiency; it expands what is possible in data center design. By integrating such systems directly into server racks, operators could substantially reduce their carbon footprint without sacrificing performance. It is an elegant solution that aligns with the broader need for environmentally responsible infrastructure capable of supporting the AI revolution.

Strategic Implications and Future Prospects

The significance of Park’s development extends beyond hardware improvements. It reflects a shift toward reimagining the foundations of digital infrastructure, with energy efficiency at the core of technological advancement. The work aligns with the broader goals of the Center for Energy Innovation, a multidisciplinary hub dedicated to solving complex energy challenges, and the collaboration promises not only advanced cooling solutions but also holistic strategies for optimizing energy use across the board.

Looking ahead, the real impact hinges on whether these two-phase cooling systems can be adopted and scaled. Park envisions them being integrated into data centers over the next decade, just as AI becomes ubiquitous. By delaying or even eliminating the capacity bottlenecks associated with traditional cooling, these systems could pave the way for more sustainable and resilient digital ecosystems.

Crucially, this approach reflects a proactive stance against looming limitations. Rather than reacting to crises, innovators are anticipating constraints and stretching the boundaries of current technology, making the future of AI computing not only more powerful but also more environmentally conscious. There is a palpable sense of optimism that such advances will spark a wave of energy-efficient innovation across the industry, ultimately democratizing access to robust AI infrastructure while safeguarding the planet.

Pushing the envelope in cooling technology is not just an engineering challenge; it is a moral imperative. As AI becomes integral to everyday life, the infrastructure supporting it must become a model of sustainability. Through bold innovations like two-phase cooling, the industry can turn a significant energy sink into a symbol of responsible, forward-thinking progress, ushering in a new era of smart, clean, and efficient digital infrastructure.
